Good read. Funny how I always thought the sensor read RGB, instead of simple light levels behind a filter pattern.

You could see each little 2x2 block as a pixel and call it RGGB. It’s done like this because our eyes are much more sensitive to the middle wavelengths; our red and blue cones can detect some green too. So the green details are much more important.
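To make the "2x2 block as a pixel" idea concrete, here is a minimal sketch in plain Python. The function name `bin_rggb` and the sample values are made up for illustration, and it assumes an RGGB layout with even dimensions. Real raw converters interpolate (demosaic) to keep full resolution instead of halving it like this.

```python
def bin_rggb(mosaic):
    """Collapse each 2x2 RGGB block into one (R, G, B) pixel.

    Assumes even dimensions and this layout inside every block:
        R G
        G B
    """
    pixels = []
    for y in range(0, len(mosaic), 2):
        row = []
        for x in range(0, len(mosaic[y]), 2):
            r = mosaic[y][x]
            # Two green photosites per block: average them.
            g = (mosaic[y][x + 1] + mosaic[y + 1][x]) / 2
            b = mosaic[y + 1][x + 1]
            row.append((r, g, b))
        pixels.append(row)
    return pixels


# Made-up 4x4 readout: one light level per photosite, no colour yet.
mosaic = [
    [10, 20, 30, 40],
    [22, 5, 44, 6],
    [50, 60, 70, 80],
    [62, 7, 84, 8],
]
rgb = bin_rggb(mosaic)  # 2x2 image of (R, G, B) tuples
```

The output has half the width and height of the sensor grid, which is why cameras don't actually do it this way.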

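A similar thing is done in JPEG: it stores brightness (luma), which is weighted mostly toward green, at full resolution, and usually stores the color (chroma) channels at reduced resolution.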
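For reference, JPEG files normally store YCbCr rather than RGB, and green dominates the brightness (Y) channel. A small sketch of the JFIF-style conversion for 8-bit values; the function name `rgb_to_ycbcr` is just for illustration:

```python
def rgb_to_ycbcr(r, g, b):
    """Full-range RGB -> YCbCr as used by JFIF (8-bit values, 0..255)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b          # luma: green has the largest weight
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr


# Luma of fully saturated primaries: green contributes the most by far.
luma_red = rgb_to_ycbcr(255, 0, 0)[0]
luma_green = rgb_to_ycbcr(0, 255, 0)[0]
luma_blue = rgb_to_ycbcr(0, 0, 255)[0]
```

Encoders then typically keep Y at full resolution and downsample Cb and Cr (for example 4:2:0), which is the part that resembles the extra green photosites on the sensor.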

This write-up is really, really good. I think about these concepts whenever people discuss astrophotography or other computation-heavy photography as being fake, software-generated images. The reality is that translating sensor data into a graphical representation for the human eye (with all the quirks of human vision, especially around brightness and color) requires conscious decisions about how those charges or voltages on a sensor should be turned into pixels in a digital file.

Same, especially because I’m a frequent sky-looker but have to warn anyone who rides along that all we’re going to see by eye is pale fuzzy blobs. All my camera is going to show you tonight is pale spindly clouds. I think it’s neat as hell that I can use some $150 binoculars to find interstellar objects, but many people are bored by the lack of Hubble-quality sights on tap. Like… yes, and they sent a telescope to space in order to get those images.

That being said, I once had the opportunity to see the Orion Nebula through a ~30" reflector at an observatory, and damn. I got to eyeball about what my camera can do in a single frame with perfect tracking and settings.

Good post! Always nice to see actual technology on this sub.

How do you get a sensor data image from a camera?

Most cameras will let you export raw files, and a lot of phones do as well (although the phone ones aren’t great, since they usually do a lot of processing on the data before giving you the normal picture).

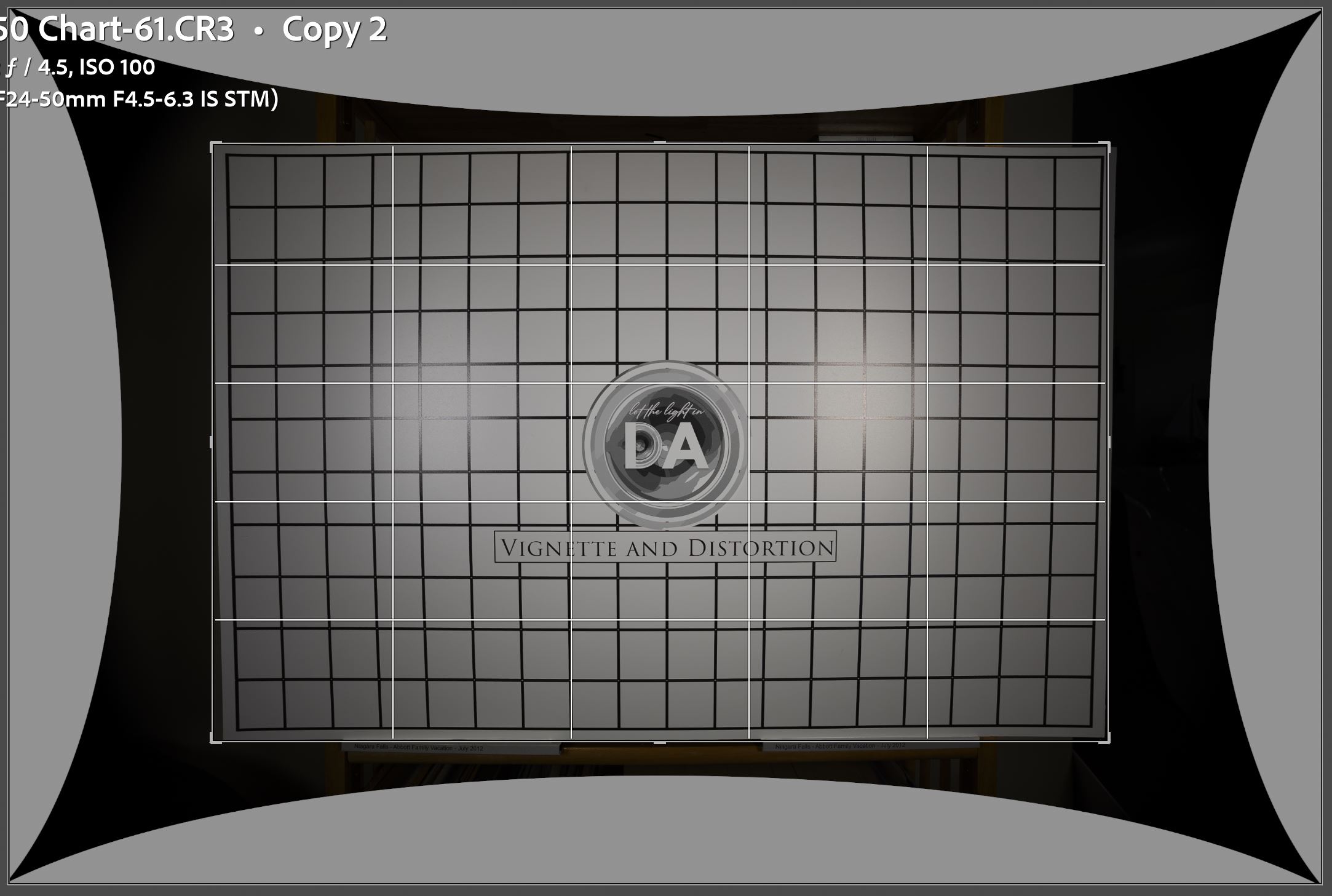

My understanding is that truly raw phone data also has a lot of lens distortion, and proprietary code written by the camera brand uses specific algorithms to undo that effect. And this is the part that phone tinkerers complain is not open source (well, it does lots of other things to the camera too).

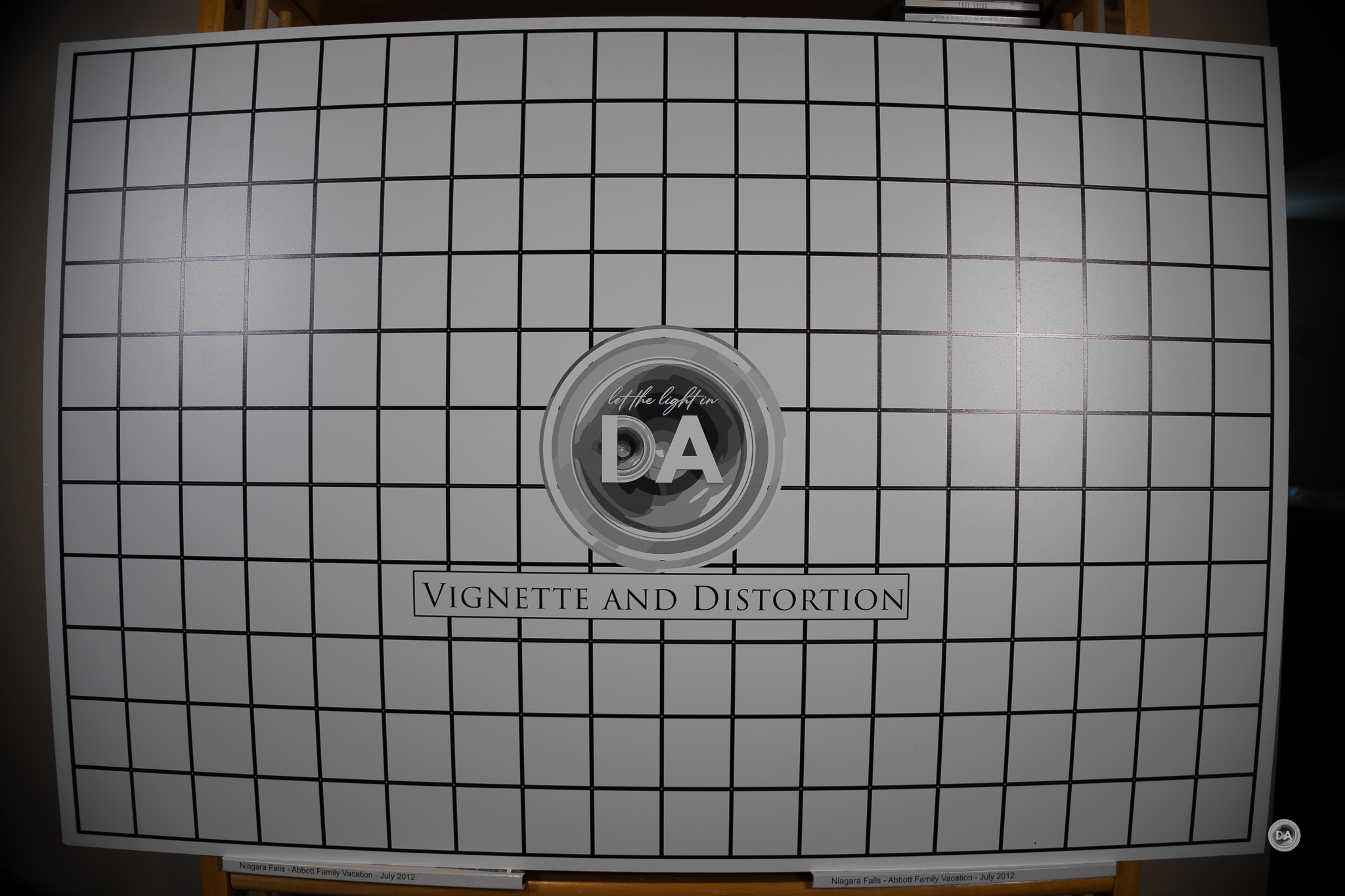

Modern mirrorless cameras do this too. For example, this is what my Canon kit lens looks like with/without digital barrel distortion correction:

Not my photos. From: https://dustinabbott.net/2024/05/canon-rf-s-18-45mm-f4-5-6-3-is-stm-review/

And https://dustinabbott.net/2024/04/canon-rf-24-50mm-f4-5-6-3-is-stm-review/

My own unprocessed RAWs are pretty wild. But (IMO) it’s a reasonable compromise to make lenses cheaper and better, outside of some ridiculous examples like the 24-50.
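For anyone curious what "digital distortion correction" roughly means, here is a toy sketch of the simplest radial model. This is not Canon's actual method; real lens profiles use more terms plus vignetting and chromatic aberration data. The function name `distort` and the coefficient `k1` are hypothetical, and coordinates are normalized so (0, 0) is the image center.

```python
def distort(x, y, k1):
    """Map an ideal (undistorted) point to where the lens actually put it.

    One-term radial model: r_distorted = r * (1 + k1 * r^2).
    A negative k1 pulls points toward the center (barrel distortion).
    """
    r2 = x * x + y * y
    scale = 1 + k1 * r2
    return x * scale, y * scale


# To build the corrected image, walk over its pixels and, for each ideal
# position, sample the raw image at the distorted position.
center = distort(0.0, 0.0, -0.1)   # the center never moves
corner = distort(1.0, 1.0, -0.1)   # corners get pulled inward the most
```

Because the corners are pulled in the most, the corrected image has to be stretched and cropped, which is why heavily corrected lenses lose some corner sharpness.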

Not sure how worth mentioning it is considering how good the overall write up is.

The human visual system does have a non-linear perception of luminance, but that isn’t why the camera data needs to be adjusted. It needs to be adjusted because the display has a non-linear response: the data is encoded so that the light coming off the display’s face ends up linear with respect to the scene. So it’s the display’s non-linear response that is being corrected, not the human visual system.
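As a concrete reference for the non-linear step being discussed, this is a sketch of the standard sRGB transfer function on values in the 0..1 range. The function names are mine; the constants are the ones from the sRGB definition.

```python
def srgb_encode(linear):
    """Linear light (0..1) -> sRGB-encoded value (0..1)."""
    if linear <= 0.0031308:
        return 12.92 * linear
    return 1.055 * linear ** (1 / 2.4) - 0.055


def srgb_decode(encoded):
    """sRGB-encoded value (0..1) -> linear light (0..1), the inverse step."""
    if encoded <= 0.04045:
        return encoded / 12.92
    return ((encoded + 0.055) / 1.055) ** 2.4


# 18% grey in linear light lands at roughly 46% of the encoded range.
encoded_grey = srgb_encode(0.18)
round_trip = srgb_decode(encoded_grey)
```

Encoding and then decoding gets you back to the original linear value, so the whole chain from sensor to emitted light stays linear overall.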

There is a bunch more that can be done and described.

Added https://maurycyz.com/index.xml to my feed reader!

#nofilter

Fake dear head